The mechanics of peer review have changed many times over the last half-century, but arguably, its purpose hasn’t.

Journals still rely on the same underlying idea: independent experts examine research before it becomes part of the scholarly record. The process is designed to protect credibility, test claims, and maintain a shared standard of rigour. This principle has remained steady, even as the infrastructure that holds the process together has changed repeatedly.

The Changing Infrastructure of Peer Review

Sometimes, those shifts unfold slowly enough that people hardly notice the transition as it happens. At other times, they arrive all at once, forcing editorial teams to adapt to unfamiliar tools, unfamiliar workflows, and unfamiliar expectations in a very short period of time.

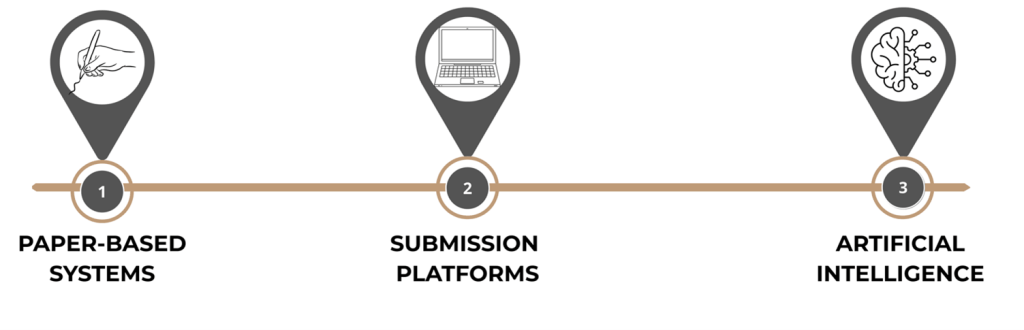

Looking back at the recent history of peer review, we can see that the system has moved through three distinct operational eras: a paper-based world built on postal correspondence, a digital world shaped by submission platforms, and an emerging environment in which artificial intelligence is beginning to sit quietly inside editorial workflows.

Each transition has brought new efficiencies, along with moments of disruption, and each has required more than just new technology: editorial teams, reviewers, and authors have also had to change how they work. In practice, the success of any system change has depended not only on the technology itself, but on the people supporting the transition and helping journal communities adapt to new processes.

This human side of change is often less visible than the technology, but it is frequently what determines whether a transition succeeds or struggles.

Era One: When Peer Review Moved by Post

The earliest editorial offices ran almost entirely on paper (and humans). Manuscripts arrived in envelopes, were logged by hand, and passed from desk to desk before eventually reaching reviewers by post. Reports returned the same way, and everything moved at the pace of physical delivery. Delays were simply part of the process.

There was a certain stability to this system; it was slow, but predictable. Editorial offices built routines around postal timelines and paper filing systems, and experienced editors developed a sense of when to follow up, when to send reminders, and when to look for another reviewer.

Administrative work filled much of the day. Tracking submissions meant maintaining spreadsheets, index cards, or filing cabinets, and locating a manuscript often involved walking to another office or searching through folders.

None of this was especially efficient, but the workflow had a kind of physical logic: the manuscript moved from person to person, and each step was visible.

Era Two: The Rise of Online Submission Platforms

By the late 1990s and early 2000s, the scale of academic publishing was changing. Submission volumes were rising, international collaborations were becoming more common, and journals were receiving submissions from authors all over the world. Postal workflows that once seemed manageable began to feel increasingly strained. Editorial offices needed a way to track submissions more quickly, assign reviewers more efficiently, and monitor manuscript progress without relying on paper files.

The first wave of online submission systems emerged in response to that pressure.

Platforms such as ScholarOne and Editorial Manager introduced the idea that the entire peer review process could be handled through a single digital interface. Authors uploaded their manuscripts rather than mailing them; editors assigned reviewers through online dashboards; and review reports arrived electronically and were stored in the system.

The benefits were immediately visible: turnaround times shortened; editorial offices could see exactly where each manuscript sat in the review process; and data could be tracked and analysed in ways that were almost impossible in the paper era.

However, while the technology improved efficiency, the transition was rarely as simple as installing a new platform and asking editors to log in. Journals often found that successful implementation depended as much on supporting editors, reviewers, and authors through the change as on the platform itself.

Editorial teams had built their professional habits around the paper workflows they knew, and moving those habits into a digital environment required more than technical training. Editors needed to understand how their decisions would now be recorded, how the system would manage reviewer invitations, and how author correspondence would be generated automatically.

The shift also changed the rhythm of editorial work. Paper workflows had natural pauses built into them, because postal delivery created a buffer between each step of the process. Digital platforms removed many of those pauses: manuscripts moved more quickly, and expectations around response times began to change.

For some editors, the transition felt liberating, while for others it felt disorienting. A system designed to increase efficiency also introduced new layers of complexity. Editorial boards that had once relied on personal correspondence suddenly found themselves steering through unfamiliar dashboards and automated reminders.

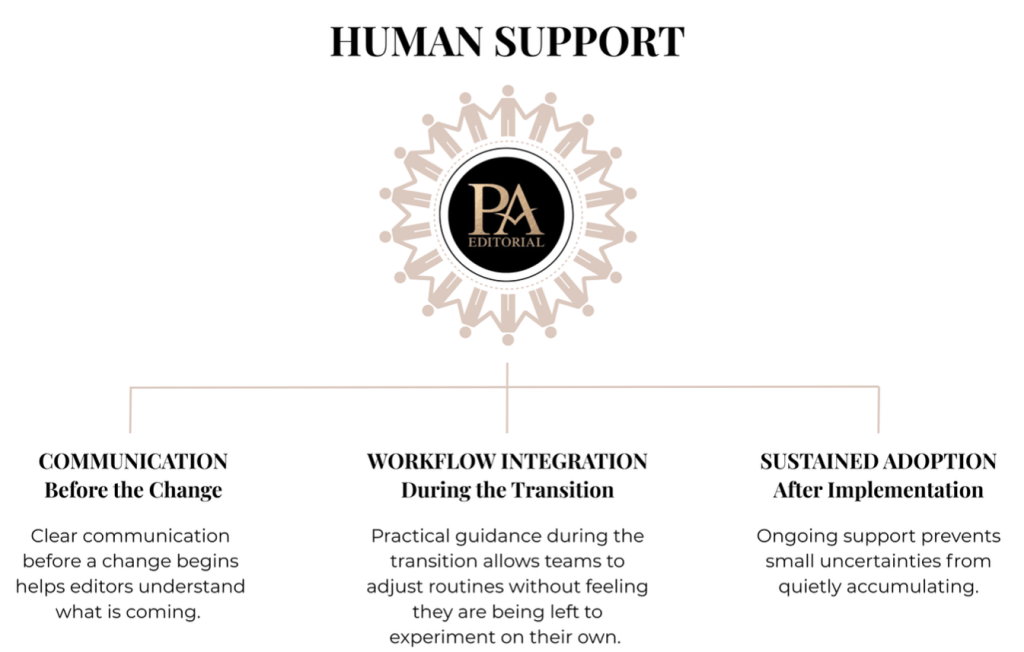

What publishers often discover during system changes is that the technical migration is only part of the project. The real transition happens afterwards, when editors, reviewers, and authors begin working in the new environment and encounter small uncertainties or practical difficulties. At this stage, having experienced editorial staff available to guide users, answer questions, and resolve issues becomes just as important as the system itself. Change is rarely resisted because people dislike improvement; more often, it becomes difficult when people feel unsupported while trying to adapt to new ways of working.

Era Three: The Arrival of AI-Enabled Editorial Tools

In recent years, a new layer of technology has begun to appear within the peer review ecosystem. Artificial intelligence (AI), once discussed mainly in theory, is now being integrated into editorial tools in increasingly practical ways.

Some applications are relatively straightforward. AI-assisted tools can help identify potential reviewers by analysing publication networks and citation patterns. Others focus on research integrity, scanning manuscripts for image manipulation, text overlap, or patterns that may indicate paper-mill activity. Language-analysis tools can also flag sections of text that appear to have been generated automatically.

Submission volumes remain high, editorial resources remain limited, and publishers face increasing pressure to identify problematic manuscripts before they enter the literature. AI systems promise to ease that pressure by processing large volumes of information quickly and highlighting areas that require human attention. Even so, the arrival of these tools has created a familiar moment of uncertainty and disruption.

These tools are designed to assist editorial decision-making rather than replace it, yet the introduction of AI inevitably raises questions about trust, transparency, and professional judgement. Editorial boards want to know how these systems reach their conclusions and naturally want reassurance that algorithmic outputs will not override human expertise.

As with earlier platform transitions, the introduction of AI tools is not only a technical development but a human one. Editors need support in understanding what the tools do, how to interpret their outputs, and how to incorporate them into existing workflows without disrupting decision-making processes. The technology may be new, but the need for experienced editorial guidance during periods of change is not.

Concerns about trust, transparency, and professional judgement are not unreasonable. The editorial process depends on careful judgement, and judgement is difficult to automate. Our PA Triage EDitor service was designed specifically to meet these needs.

For publishers, however, the challenges are not only technical but cultural. AI-enabled workflows require clear policies, clear communication, and thoughtful integration into existing editorial routines. This means that editors need to understand what the tools are doing, how their outputs should be interpreted, and when those outputs can safely be ignored. Without that clarity, the technology risks becoming another source of friction rather than a genuine support.

AI As the Latest Shift, Not the First

The current movement toward AI-enabled editorial tools has made this pattern even more visible. The technology is developing quickly, often faster than editorial policies and workflows can adapt. Journals are experimenting with new systems for reviewer matching, integrity screening, and manuscript triage. Some of these tools will become part of everyday workflows, while others will fall away once their limitations become clear.

During this period of experimentation, editorial teams need time and support to understand what these tools are actually doing and how their outputs relate to everyday editorial decisions. A tool that appears powerful in theory may prove less useful in practice if its results are difficult to interpret, while another may only become valuable once editors understand how to use it properly.

The challenge rarely lies in the technology itself, but in helping people adapt to it. Scholarly publishing has always moved forward through a series of adjustments, and the role of experienced editorial support is often to make those adjustments manageable for the people who keep journals running day in and day out.

We support publishers and editorial offices at all stages of operational change, from large-scale submission system migrations to the integration of AI-driven research-integrity tools. We understand that these transitions can impact hundreds of journals, reshaping workflows, editorial responsibilities, and decision-making pathways. Whether introducing new submission platforms, embedding AI tools into editorial dashboards, or adapting team structures, we ensure change is implemented seamlessly, with minimal disruption and full confidence from editors and stakeholders.

None of these changes is unusual in modern scholarly publishing, but each one alters the rhythm of our editorial workflows.

The Common Threads

Looking across these three eras – paper, platform, and AI-enabled systems – it is tempting to focus on the technologies themselves, but the deeper pattern lies elsewhere.

Each technological shift has changed the infrastructure of peer review, but it hasn’t changed who makes the decisions; editors, reviewers, and editorial teams still carry the process forward. When a new system appears, its usefulness depends on whether those people can understand how it fits into their work. That’s why transitions between systems often prove more difficult than expected.

Publishers tend to focus on the technical implementation: data migration, platform configuration, and system testing. All of those matter, yet the moment when the new system goes live is only the beginning of the real transition. Editorial teams must learn how to work within the new environment: questions will undoubtedly emerge, and small procedural uncertainties will accumulate.

During system transitions, the practical challenges are often not technical but human. Reviewers may struggle to access a new platform, authors may find submission processes confusing, and editors may be reluctant to change long-established workflows. In these situations, insisting that everyone simply adapt to the new system can risk losing valuable reviewers, submissions, or even editorial board members. Providing experienced editorial support during these transitions allows journals to maintain momentum while communities adjust to new processes. Acting as a human bridge between systems and users helps ensure that reviews are completed, submissions are not lost, and editors remain supported, allowing technological change to be implemented without disrupting the peer review process itself.

Final Thoughts…

Peer review itself is remarkably resilient. The basic idea has survived multiple technological shifts without losing its core purpose. Research still moves through the same essential sequence: submission, evaluation, revision, decision. The tools surrounding that sequence will continue to develop, but the need for careful editorial judgement remains.

In this sense, the history of peer review is not only a story about systems, but also a story about the communities that sustain them… and communities rarely adapt to change simply because a new tool appears; they adapt when someone helps them understand it.

About PA EDitorial

In recent years at PA EDitorial, we have found ourselves working increasingly in the space between implementation and understanding. A system migration may be technically complete, or a new integrity tool fully installed, yet editorial teams are still adjusting to what that change means in practice. Workflows shift, and questions emerge. What helps most, at this stage, is often someone who understands editorial practice sitting alongside the transition and translating the new environment into something workable.

Much of the support we now provide sits within our Editorial Change & Transition Services, which focus on helping editorial teams adapt to new systems, tools, and workflows without disrupting the work journals depend on. This is where change management matters – not as a theoretical framework, but as practical support, translating system features into everyday editorial language.

If you’d like to learn more about how we can support you, get in touch at info@paeditorial.co.uk.